Jacob Schaal: “Let companies profit from training graduates”

AI disproportionately affects job prospects for graduates. An income-share fee could incentivize companies to invest in training.

Jacob Schaal is a researcher at King’s College London and ERA Cambridge, where he focuses on the impact of AI on labor markets. He is one of the co-editors of the newsletter “AI Economics Brief”, published by Windfall Trust, a UK-based nonprofit organization that focuses on guiding societies through the upcoming AI economic transformation.

Work/Code: When we started Work/Code, our working assumption was that AI will change white collar work in the same way the steam engine and electricity changed blue collar work. Where are we currently on this journey from your perspective?

Jacob Schaal: We are still at the beginning, but we can certainly see early warning signs. So far, economists have generally looked at how much a job is exposed to AI. But more recent research suggests that just measuring AI exposure is probably not the right metric. Instead, we have to differentiate between automation that lowers the value of expertise and automation that increases it.

For some subgroups, such as entry-level roles, we already have more evidence. Three studies from researchers at Harvard, Stanford, and King’s College were able to show that the slow-down in graduate hiring cannot be solely explained by macroeconomic factors like interest rates. This pattern is called seniority-biased technological change – unlike previous waves of automation that rewarded education, AI rewards experience and tacit knowledge. In related work at Cambridge ERA, I’ve classified over 19,000 occupational tasks by their susceptibility to AI automation, including tacit knowledge.

You mentioned there’s an uneven impact of AI on the workforce. Can you expand on that?

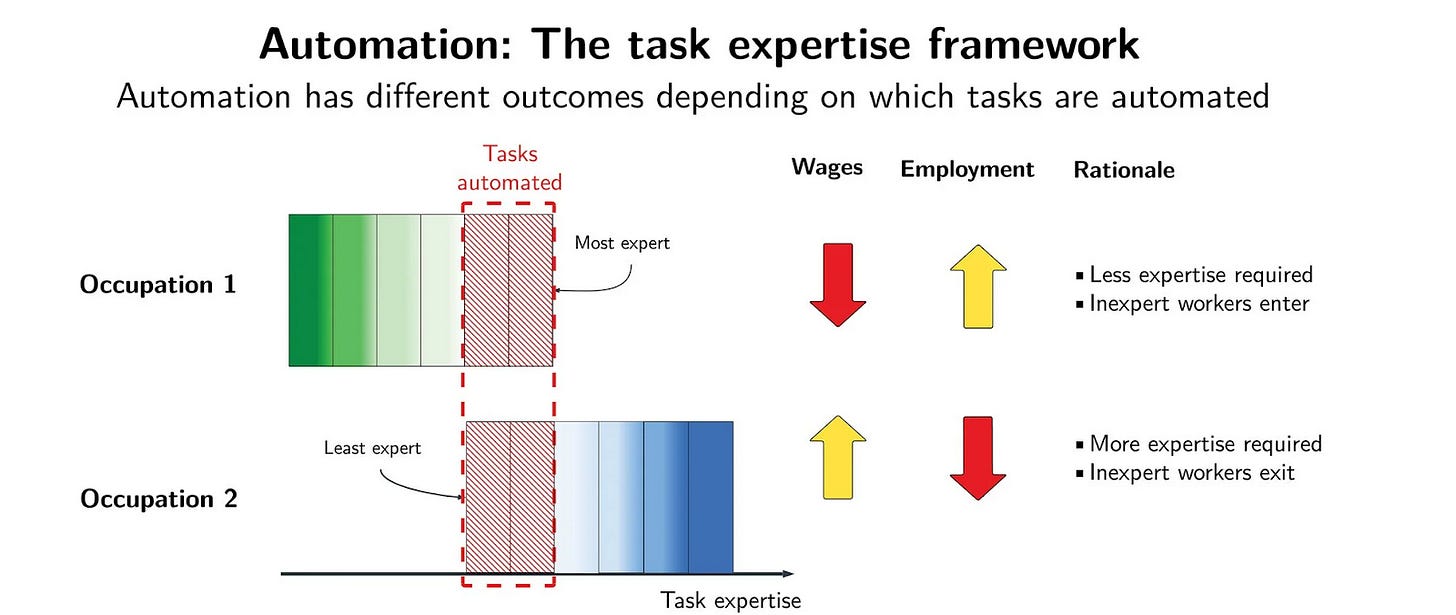

Of course. Last year, MIT economist David Autor and his co-author published a new framework that explains why the level of expertise required in a given task matters a lot: each job has a distribution of tasks that require different levels of expertise. When AI automates the lower part of that distribution, the expertise bar rises. Employment in these jobs falls, since fewer people are qualified to do them, while wages rise. Conversely, if AI automates the higher-skilled part of the job, employment rises but wages fall.

A common example of this is GPS, which lowered barriers to entry for taxi drivers because they no longer had to memorize street maps – a high-skilled task. On the other hand, accountants may benefit from automation: while a machine could take over some of the grunt work, higher-value work would still require a human, and accountants would be able to charge higher rates for it. Is that correct?

Yes, that’s roughly what the theory suggests.

Are there estimates about the percentage of roles in industrialized countries like Germany that tend towards automation compared to specialization?

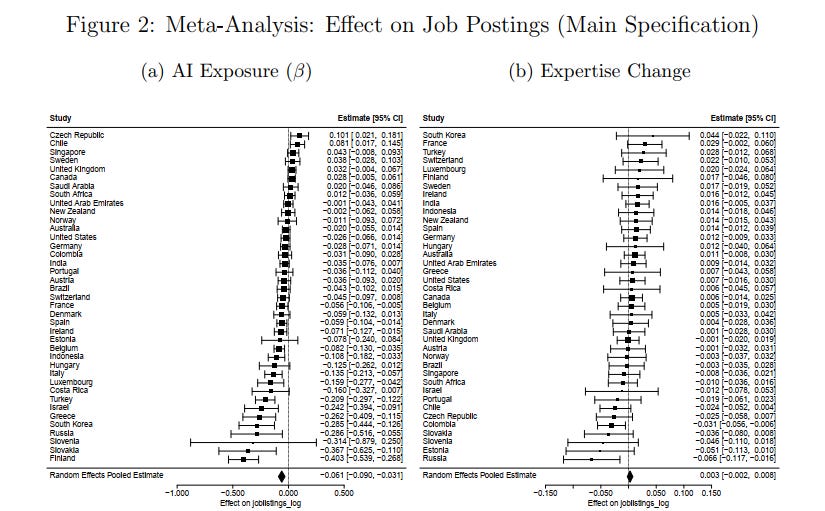

That’s a good question. A recent study examined job postings across all OECD countries and estimated the effect of AI on them. Following the launch of ChatGPT, job postings in Germany declined, but the effect was not statistically significant. Overall, 31 of 39 countries had negative point estimates and 14 were significant, so something is going on.

You mentioned the impact of AI on the graduate labour market. Many economists now think that broader macroeconomic factors are better suited than AI to explain the slowdown of graduate hiring. But you’re saying you can already see an impact of AI specifically on the graduate job market. Can you expand on where you see this?

The original Stanford “Canaries in the coal mine” paper on this topic was perhaps less clear methodologically, so you could argue that macroeconomic effects were involved. But the authors published a revised study a few weeks ago showing that AI’s impact on the labour market only started in 2024, not in 2022.

And we see broad evidence from the above-mentioned Harvard and King’s College studies as well, which use job-posting data and control for macroeconomic effects that affect all workers inside a firm equally. These studies, the latter of which I’ve worked on myself, show that AI-exposed jobs see less hiring. I’m fairly confident we see some effect of this on young workers, because it is easier for firms to postpone hiring than to lay off the existing workforce.

One long-term concern of mine is that this threatens the transmission of knowledge, since early-career workers are the managers of tomorrow. The first few years used to function as a training programme, but that’s much less attractive for employers now.

IBM just announced they would triple graduate hiring. And firms like Notion hire for roles specifically focused on designing graduate programmes that give broad exposure across different parts of the firm, rather than deep specialisation. This seems to suggest that a T-shaped career model – deep expertise in one area and additional knowledge in adjacent skills – might be the future. Do you think companies like IBM are on a path to solving the AI dilemma for graduates?

It’s good that some companies like IBM are thinking about this, and bigger firms are more likely to invest resources into graduate programmes like the ones you describe. But these are just anecdotes so far. Additionally, there are many smaller employers in Germany, and I am less confident that they will follow suit quickly.

This also connects to our current – still to be published – research project, where we look at how AI changes task composition in job postings. For software engineers in the UK, for example, we already see less demand for coding skills and more demand for writing and management skills. This shows that we definitely need to rethink how we train young people.

One way to incentivise companies to hire and train graduates is through income share agreements. Under a scheme I developed in a recent policy paper, firms that hire workers within their first five years of entering the labour force would receive one per cent of those workers’ gross earnings for 25 years, regardless of where they subsequently work. The share is proportional: employing someone for two of their first five years yields 40 per cent of the payment stream. For a worker earning £40,000 annually, that amounts to roughly £6,600 in present value, which is a meaningful incentive to hire and develop juniors without requiring any government spending. Because firms retain this stake even after the worker leaves, the poaching externality that has always discouraged training investment is directly addressed. Organisations like Chancen eG in Germany already deploy similar models in education financing, and the scheme could operate through the existing tax infrastructure without new bureaucracy.
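The arithmetic behind the scheme described above can be sketched in a few lines of Python. This is an illustration only, not the policy paper's model: the flat salary, the end-of-year payments, and the 3.5 per cent discount rate are assumptions made here, and the function names are hypothetical. Under those assumptions, the full stake on a £40,000 salary comes out close to the roughly £6,600 quoted.

```python
def present_value(annual_payment: float, years: int, discount_rate: float) -> float:
    """Present value of a level payment received at the end of each year."""
    return sum(
        annual_payment / (1 + discount_rate) ** t
        for t in range(1, years + 1)
    )


def income_share_value(
    gross_salary: float,
    years_employed: float,
    share: float = 0.01,          # one per cent of gross earnings (from the interview)
    window_years: int = 5,        # first five years in the labour force (from the interview)
    payout_years: int = 25,       # length of the payment stream (from the interview)
    discount_rate: float = 0.035, # ASSUMPTION: not stated in the interview
) -> float:
    """Present value of a firm's stake, assuming a flat salary over the payout period."""
    # The stake is proportional to the part of the early-career window
    # that the worker spent at the firm, capped at the full window.
    fraction = min(years_employed, window_years) / window_years
    annual_payment = gross_salary * share * fraction
    return present_value(annual_payment, payout_years, discount_rate)


full_stake = income_share_value(40_000, years_employed=5)
partial_stake = income_share_value(40_000, years_employed=2)

print(f"Full five years: £{full_stake:,.0f}")
print(f"Two of five years: £{partial_stake:,.0f}")
```

A higher discount rate or a rising salary path would shift the result, so the figure is best read as an order of magnitude rather than a precise estimate.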

Unlike the China shock, which hit a limited number of industries and regions in a relatively short period of time, AI is more diffuse. It affects a broad range of jobs across sectors and at different speeds. Does the diffuse impact of AI on labor mean we are more likely to sleepwalk into an AI job apocalypse?

The diffuse nature does make it harder to respond politically. Policymakers would react more strongly if there was a big, obvious shock to the labour market. But in general, Germany is behind. The US AI Action Plan has an extensive workforce section, and the UK has set up new institutional responses. So there is actually a lot more that the government could do.

What are the metrics you look at to describe the impact of AI on work, and which data points are missing in your view?

The Anthropic Economic Index is quite good, although biased towards certain roles because it is based only on Claude users. The challenge is that most exposure metrics rely on LLMs to classify whether a technology affects certain labour markets, which can be imprecise.

We’re now working with a large UK job postings dataset to build a dynamic picture, using text analysis to track how technologies change job requirements over time. Software engineers are the first case we’re working through. But beyond that, particularly for young professionals, the datasets are still quite limited, so we are really flying half-blind here.

We’ve been talking mainly about the impact on graduates, partly because the assumption is that large language models are best at tasks that recent graduates do. But a trend we’re now seeing is that AI labs are increasingly investing in real-world training environments in order to train models on tasks that previously only very senior professionals could do. Could this lead to a much broader scope of automation than we currently think?

The question is how much of that expert knowledge can really be captured and how much remains tacit. I wouldn’t say it’s impossible to automate all jobs, but at least for now, senior professionals are a bit more protected.

This could, however, also be an opportunity for Germany. By leaning into “reinforcement learning as a service”, German firms could train open-source models on their own proprietary industrial data. That could be a model for retaining value creation in Europe as third-country frontier models dominate the basic layer.

That connects to the broader question of what German policymakers should do. Some argue that Europe needs its own foundation models. Others suggest that adoption matters more. Scholars like Jeffrey Ding argue that diffusion of technology often benefits countries that were not the inventors. Is it important for Germany and Europe to lead in foundation model development, or is being a fast adopter good enough?

It’s unrealistic to try to compete with the US at the frontier, although it’s important to have some backup options and open-source alternatives.

Likewise, having compute power available locally to run open-source models, especially for government use, is important.

But look at the Second Industrial Revolution: Electricity was pioneered in America, but Zeiss, Bosch, and Siemens were the ones who adopted it most effectively in industrial processes.

So I would say the focus should be on adoption and on retaining value through that adoption rather than on frontier development.

You’re affiliated with the Windfall Trust, a nonprofit organization that focuses on the societal implications of AI, and you recently gathered a group of policymakers and academics in London. What did you learn, and what do you think the most important policy implications are from a European perspective?

Looking at the broader policy landscape in the UK and the US, three things stand out that Germany could learn from:

First, the UK government has established a dedicated Future of Work Unit, while Germany has no equivalent institutional home for this issue.

Second, the US Bureau of Labor Statistics has been mandated under the AI Action Plan to collect AI-specific labour market data. Germany’s statistical infrastructure does not yet capture AI adoption data.

Third, scenario planning – testing policy responses against different AI development trajectories – is gaining traction among UK policymakers as a way to prepare for disruption before it becomes a crisis. Germany tends to wait for coalition treaty mandates before acting on emerging challenges. On AI and the labour market, that approach risks being too slow.

The seniority-biased technological change framework explains a lot. When AI automates entry-level tasks, the bar rises and fewer people can enter. But those who do enter get more interesting work. The challenge is how people get to that first rung.